Nutanix Enterprise AI provides an easy-to-use, unified generative AI experience on-premises, at the edge and now in public clouds

Nutanix has extended its AI infrastructure platform with a new cloud native offering, Nutanix Enterprise AI (NAI), that can be deployed on any Kubernetes platform: at the edge, in core data centers, and on public cloud services such as AWS EKS, Azure AKS, and Google GKE. NAI delivers a consistent hybrid multicloud operating model for accelerated AI workloads, letting organizations keep their models and data in a secure location of their choice while improving return on investment (ROI). Using NVIDIA NIM for optimized foundation model performance, Nutanix Enterprise AI helps organizations securely deploy, run, and scale inference endpoints for large language models (LLMs), so that generative AI (GenAI) applications can be deployed in minutes rather than days or weeks.

Generative AI is an inherently hybrid workload: new applications are often built in the public cloud, models are fine-tuned on private data on-premises, and inference is deployed closest to the business logic, whether at the edge, on-premises, or in the public cloud. This distributed hybrid GenAI workflow can present challenges for organizations concerned about complexity, data privacy, security, and cost.

Nutanix Enterprise AI provides a consistent multicloud operating model and a simple way to securely deploy, scale, and run LLMs using NVIDIA NIM optimized inference microservices as well as open foundation models from Hugging Face. This enables customers to stand up enterprise GenAI infrastructure with the resiliency, Day 2 operations, and security they require for business-critical applications, whether on-premises or on Amazon Elastic Kubernetes Service (EKS), Azure Kubernetes Service (AKS), or Google Kubernetes Engine (GKE).

Additionally, Nutanix Enterprise AI uses a transparent and predictable pricing model based on infrastructure resources, rather than usage- or token-based pricing, which can be hard to predict. This predictability matters for customers looking to maximize ROI from their GenAI investments.

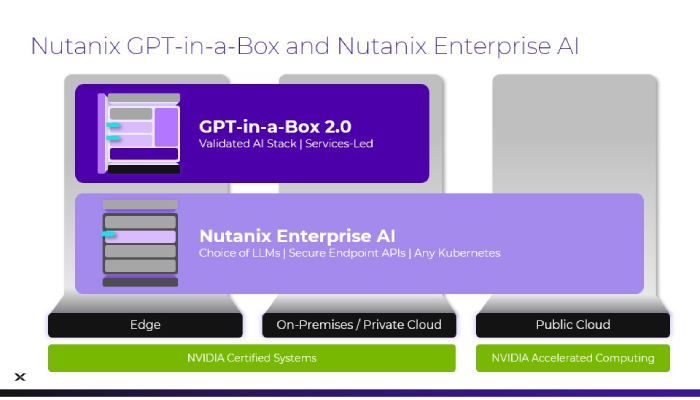

Nutanix Enterprise AI is a component of Nutanix GPT-in-a-Box 2.0. GPT-in-a-Box also includes Nutanix Cloud Infrastructure, Nutanix Kubernetes Platform, and Nutanix Unified Storage, along with services to support customer configuration and sizing needs for on-premises training and inferencing. For customers deploying in the public cloud, Nutanix Enterprise AI can run in any Kubernetes environment while remaining operationally consistent with on-premises deployments.

Nutanix Enterprise AI can be deployed with the NVIDIA full-stack AI platform and is validated with the NVIDIA AI Enterprise software platform, including NVIDIA NIM, a set of easy-to-use microservices designed for secure, reliable deployment of high-performance AI model inferencing. Nutanix GPT-in-a-Box is also an NVIDIA-Certified System, helping ensure reliable performance.

“Generative AI workloads are inherently hybrid, with training, customization, and inference occurring across public clouds, on-premises systems, and edge locations,” said Justin Boitano, vice president of enterprise AI at NVIDIA. “Integrating NVIDIA NIM into Nutanix Enterprise AI provides a consistent multicloud model with secure APIs, enabling customers to deploy AI across diverse environments with the high performance and security needed for business-critical applications.”

Key use cases for Nutanix Enterprise AI include: enhancing customer experience with GenAI analysis of customer feedback and documents; accelerating code and content creation with copilots and intelligent document processing; fine-tuning models on domain-specific data to further accelerate code and content generation; strengthening security with AI models for fraud detection, threat detection, alert enrichment, and automatic policy creation; and improving analytics with models fine-tuned on private data.